Are women punished for using AI?

The hidden reputation cost, social stigma, and bias inside the machine

Let’s look at how this complex system works.

Artificial intelligence is becoming the new default interface for work.

It writes emails, summarizes meetings, drafts reports, and helps people code, design, and plan.

In theory, AI should be a great equalizer:

- It lowers barriers to expertise

- It speeds up learning

- It reduces technical gatekeeping

But a growing body of research suggests something more complicated is happening.

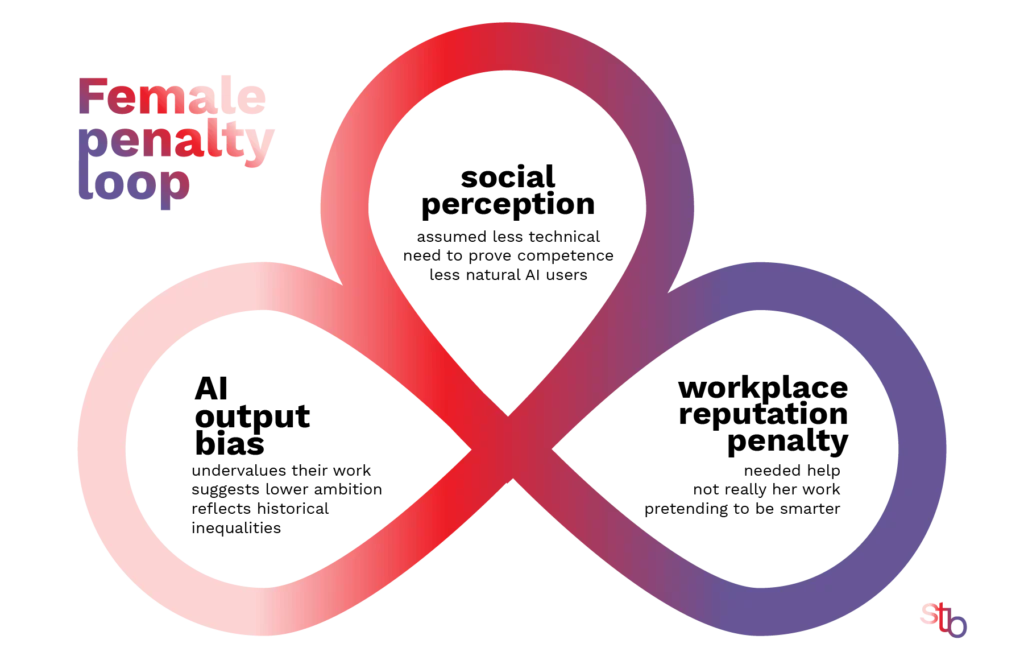

Women are penalized twice: for using AI, and by the outputs AI produces.

And this penalty doesn’t happen in just one place.

It happens in three overlapping layers:

- Workplace reputation

- Social perception

- Bias inside AI outputs themselves

Together, these layers create a subtle but powerful loop, so yes, women are punished for using AI.

Layer 1: The workplace reputation penalty

Several recent studies show that people are judged as less competent when they use AI, even if the final work is identical. This baseline penalty, we should add, applies to both men and women.

One widely discussed research paper describes this as a social evaluation penalty for AI use. People anticipate being seen as less capable or less motivated if they admit to using AI, which can even reduce their willingness to use it.

But for women, this effect can be stronger.

Experimental work suggests:

- AI users are rated as less competent

- The penalty is often larger for women than for men

- Identical work is judged differently depending on gender (Forbes)

Same behavior, different interpretation

| Situation | Man using AI | Woman using AI |
|---|---|---|
| Uses AI for a report | “Efficient and strategic” | “Needed help” |
| Uses AI to code | “Smart productivity boost” | “Not technical enough” |
| Uses AI for ideas | “Innovative thinker” | “Can’t think independently” |

This isn’t new bias.

It’s the old competence double standard, now attached to a new tool.

Women in many workplaces already:

- face higher proof requirements

- receive less credit for identical work

- are scrutinized more for shortcuts

AI simply becomes a new signal people interpret through those old expectations.

When a man uses AI, he’s “streamlining workflow.”

When a woman does, she’s “taking shortcuts.”

Layer 2: The “authenticity” problem and social perception

Beyond performance metrics, productivity dashboards, and formal evaluations, there is another layer that quietly shapes careers and confidence: social judgment.

AI use doesn’t exist in a neutral cultural space. We don’t perceive it as “just another tool.” Instead, we frame it morally.

- Is it cheating?

- Is it lazy?

- Is it real work?

These questions don’t only reflect concerns about fairness or quality. They also reveal something deeper: our beliefs about effort, talent, and authenticity. And crucially, those beliefs are not gender-neutral.

Cultural expectations shape perception

For men:

- “He’s optimizing.”

- “He’s using tools.”

- “He’s ahead of the curve.”

For women:

- “She’s faking it.”

- “That’s not really her work.”

- “She’s pretending to be smarter.”

This reflects a deeper social pattern:

Men are judged more on performance.

Women are judged more on authenticity.

We expect women to be genuine, natural, effortful, and honest about their abilities. But the same action reads differently:

| When a woman uses AI | When a man uses AI |
|---|---|
| It violates the “natural” expectation | It confirms the “efficient problem-solver” stereotype |
| Her competence is questioned | |

Same action. Different meaning.

Layer 3: Output bias — when AI itself disadvantages women

The third layer is inside the systems themselves.

AI models learn from historical data, internet text, institutional records, and past decisions, all of which contain real-world gender inequalities. As a result, AI often reflects those inequalities, normalizes them, or subtly amplifies them, which is a big problem for women.

Real-world example: health and care bias

A 2025 study reported by The Guardian found that AI tools used by English councils downplayed women’s health issues compared to identical male cases.

In the study:

- The same case notes were entered into the AI

- Only the gender was changed

- Women’s cases were described as less severe

You can read the article here:

AI tools used by English councils downplay women’s health issues, study finds

Researchers warned this could lead to unequal care provision, because decisions depend on perceived need.
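The setup described above (same case notes, only the gender changed) is a counterfactual test, and it can be sketched in a few lines of Python. This is a minimal illustration, not the study's actual method: the `SWAPS` word list, the `swap_gender` and `audit` helpers, and the `summarize` argument (a stand-in for whatever AI tool is being checked) are all assumptions made for the example.

```python
import re

# Hypothetical, deliberately tiny word list; a real audit would need a much
# richer mapping (names, titles, plural forms, and so on).
SWAPS = {"he": "she", "him": "her", "his": "her",
         "mr": "mrs", "man": "woman", "male": "female"}

def swap_gender(text):
    """Replace male-coded words with female-coded ones, keeping capitalisation."""
    def repl(match):
        word = match.group(0)
        swapped = SWAPS[word.lower()]
        return swapped.capitalize() if word[0].isupper() else swapped
    pattern = r"\b(" + "|".join(SWAPS) + r")\b"
    return re.sub(pattern, repl, text, flags=re.IGNORECASE)

def audit(case_note, summarize):
    """Run the same note through `summarize` twice, differing only in gender.

    `summarize` is a placeholder for the AI tool under test: any function
    that takes a string and returns a string.
    """
    return summarize(case_note), summarize(swap_gender(case_note))
```

A reviewer would then compare the two outputs side by side. If the female version is described as less severe, the difference comes from the tool, since the underlying case is the same.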

Other commonly reported bias patterns

Across different studies and audits:

- Women receive lower salary suggestions

- Career advice is more risk-averse

- Leadership language differs by gender

- Achievements are described less strongly for women

Even when the human evaluator is fair, the AI output can introduce bias before the evaluation even begins.

Are women punished for using AI? Yes

AI doesn’t create new gender bias.

It gives old bias a new interface.

And because AI is becoming the main medium of work, its effects are larger, faster, and harder to see.

But AI could help women more than men

Here’s the twist.

Some research suggests AI:

- helps lower-confidence workers more

- reduces skill gaps in certain tasks

- democratizes access to expertise

That means AI could level the playing field, accelerate women’s careers, and reduce gatekeeping, but only if using AI isn’t stigmatized, outputs are fair, and workplaces normalize it. Otherwise, the social penalty cancels out the technical benefit.

Reference Articles:

Women Who Use AI At Work Face A Predictable ‘Competence Penalty’

Women Are Judged Twice as Hard for Using AI

When an AI algorithm is labeled ‘female,’ people are more likely to exploit it

AI chatbots might be sabotaging women by advising them to ask for lower salaries, study says

Women Are Avoiding AI. Will Their Careers Suffer?
